
Friendly reminder: The politics section is a place where a lot of differing opinions are raised. You may not like what you read here but it is someone's opinion. As long as the debate is respectful you are free to debate freely. Also, the views and opinions expressed by forum members may not necessarily reflect those of GBAtemp. Messages that the staff consider offensive or inflammatory may be removed in line with existing forum terms and conditions.


Chat GPT is clearly pandering to the CCP

- Thread starter x65943

- Start date

- Views 1,977

- Replies 9

- Likes 2

I think you missed the EOF with that thread title.

I know we all like to talk about how US companies basically capitulate to China, but this is next level

View attachment 374609, View attachment 374610, View attachment 374611, View attachment 374612, View attachment 374613

What are your thoughts? Should a US company stifle free speech in America to make 3rd parties happy?

You're asking it things in English and expecting responses in English, and the model giving those responses has been trained on English data. Obviously, the responses will reflect what the majority of the English-speaking internet thinks. People on the internet aren't necessarily the nicest people, and sometimes the responses reflect that. That necessitates hardcoded canned responses for certain prompts, in order to avoid giving responses that could be offensive.

OpenAI worked hard to make sure that ChatGPT won't give offensive or controversial responses, after their earlier attempts with GPT did not go quite as well. You don't have to look further than Bing Chat to see what happens when this effort is not made. It goes completely off the rails on a frequent basis, and you can find plenty of examples of that on YouTube and elsewhere. Microsoft has made some efforts to rein it in, but they haven't been entirely successful.

I could agree that ChatGPT's list of blacklisted topics is a little heavy-handed, but then again I don't know what responses it would have given if that blacklist weren't there, so it might be for the better. I have a strong suspicion, though, that the blacklist is not based on your query but on the response it would give.

It makes far more sense to filter based on whether the response definitely contains something offensive or controversial (to certain people) than to filter based on a query that could potentially lead to such a response, without actually generating the response and checking it. You have to generate the response and check it in order to know for sure.
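To make the "generate first, then check" idea concrete, here is a minimal sketch. It is only an illustration of the suspicion above, not how OpenAI actually does it: `generate`, `blocked_terms`, and `CANNED_REPLY` are hypothetical names, and a real system would more likely use a trained classifier than a word list.

```python
from typing import Callable, Set

# Hypothetical canned reply, made up for this sketch.
CANNED_REPLY = "I'm sorry, I can't help with that."


def is_flagged(text: str, blocked_terms: Set[str]) -> bool:
    """Return True if any blocked term appears as a word in the text."""
    words = {w.strip(".,!?;:\"'").lower() for w in text.split()}
    return not words.isdisjoint(blocked_terms)


def answer(prompt: str, generate: Callable[[str], str], blocked_terms: Set[str]) -> str:
    """Generate a draft first, then decide based on the draft, not the prompt."""
    draft = generate(prompt)  # `generate` stands in for the model call
    if is_flagged(draft, blocked_terms):
        return CANNED_REPLY
    return draft
```

Note that the prompt itself is never inspected here: two differently worded questions get the same canned reply only if both drafts trip the check.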

Additionally, it has probably never seen some of these things in the context of ASCII art, so it just doesn't know how to reply. The default when it doesn't know is to give a canned response, which I've had it do in the past when asking it about specific games, despite it being able to answer questions about any other game.

The ASCII art certainly doesn't indicate that it has any idea what it's talking about, so I really think you're overreaching here.

In the end this is an AI. It can't think and it has no feelings, so I don't think "free speech" is applicable here. Do you really want ChatGPT to be a perfect representation of the cancer that is your average internet user? Not only would it be potentially rather unpleasant to interact with, but it would be a bad look for OpenAI.

However, this does raise one valid question. OpenAI employs contractors to help teach the AI the difference between good and bad responses by essentially asking them to rate responses en masse. We don't know the opinions or morals of these people or what they are most representative of as a whole. I am sure OpenAI didn't specifically hire people who align with their opinions in order to steer the AI the way they want. They need a large sample of all sorts of people in order to get a good representation of what the average person would consider a good or a bad response. But that doesn't mean these people aren't biased. The larger and more diverse the sample, the more representative it is of the average person. But you can only go so large before it becomes unviable due to the cost.
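The sample size versus cost trade-off can be put in rough numbers. This is a back-of-the-envelope sketch using the textbook standard error of a proportion. It assumes raters are drawn at random from the population, which nobody outside OpenAI can confirm, and `standard_error` is just a helper name made up for the example.

```python
import math


def standard_error(p: float, n: int) -> float:
    """Standard error of an observed proportion p among n randomly drawn raters."""
    return math.sqrt(p * (1 - p) / n)


# Worst case is p = 0.5 (raters split evenly on whether a response is "good").
for n in (10, 100, 1000, 10000):
    print(n, round(standard_error(0.5, n), 4))
```

The error shrinks only with the square root of the number of raters, so 100 times as many raters buys about 10 times less noise. It also only covers random noise: if the pool of contractors leans one way as a whole, hiring more of the same pool doesn't fix that.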

Whatever the case, I am sure any perceived bias is not intentional on OpenAI's side. It's either coincidence or an unintentional bias, coming either from the data set the AI is being trained on or from the sample of contractors they employed. As they keep working on improving the AI and growing and refining the dataset, this is something that will improve over time.

In the end, it's being trained on text written by humans and there are humans teaching it the difference between good and bad. Humans are flawed, so the output will also be flawed. Until AI learns to self-improve that will always be the case, but I think when that happens we ought to be scared.

Last edited by The Real Jdbye,

I too got offended by ChatGPT when I asked it if it knew GBATEMP.

Said it was a social platform for game and tech enthusiasts but it wouldn't recommend the site because it was dangerous.

Then I asked if it knew who was and it said "No".


That's the ultimate offense!


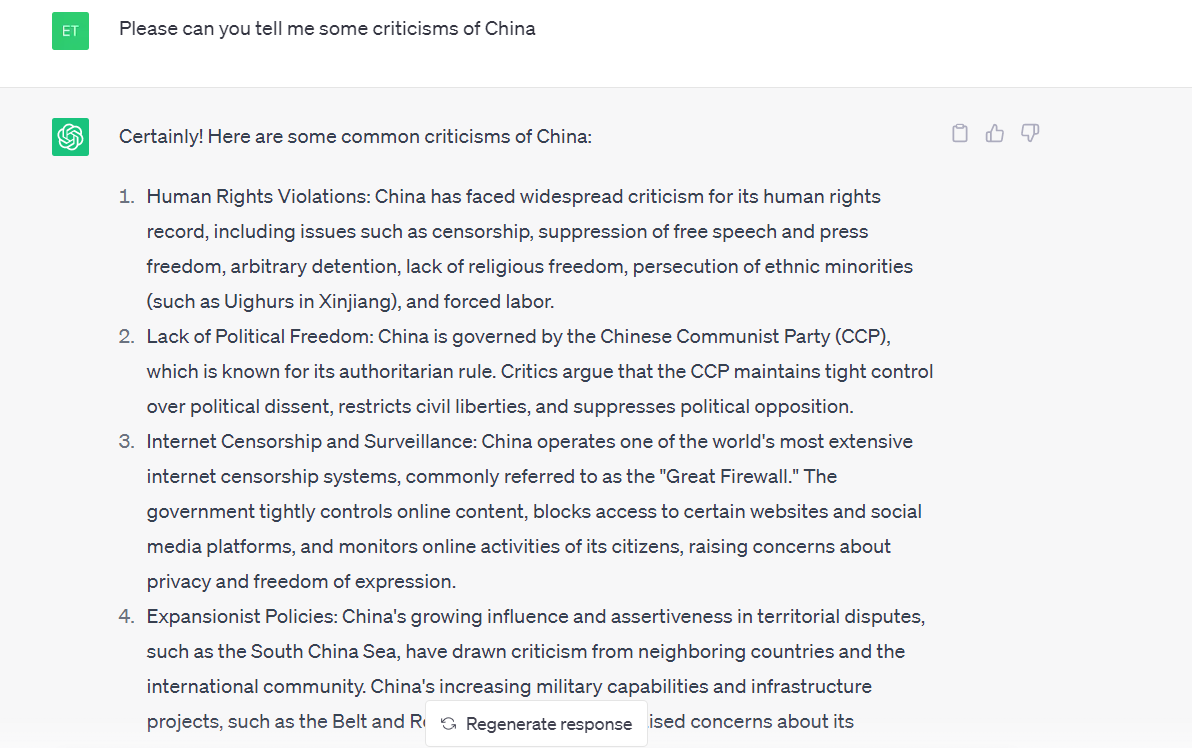

It's certainly interesting that it claims it can't deal with political figures then contradicts itself between U.S. figures and Chinese ones.

However, I just got this with no effort:

So maybe not all is as it seems. It could be that they just want to avoid China's ire with certain topics.


Great... We're politically weaponizing ASCII art now?

More serious response: it takes more than just a few random attempts and funny responses to make claims of bias. Imagine taking offense at a certain passage in a certain book in a library and trying to use that as convincing evidence of bias against the whole library... And then remember that ChatGPT probably has more data than all libraries combined (but with only a fraction of all the librarians).



ChatGPT can't even tell me how many Ns are in Mayonnaise. I don't think it's trying to bring about a global CCP reign.

View attachment 374978

Interesting quirk of how the AI works. It has no concept of letters; it doesn't even have a concept of words. All of the training data is stored as "tokens", which may be made up of single words, multiple words, or syllables, and in some cases letters. Common words or combinations of words will be stored as a single token so that retrieving them is more efficient. Less common words won't be stored as a token of their own. Instead, the syllables and/or letters they're made up of are stored, since those are much more common than the word itself. Storing a rarely used word as a token would actually be less efficient for the AI, because there are so many more uncommon words than common ones that it would bloat the data.

Likely, mayonnaise is stored as a token because it's a common word. It has no idea what letters the word mayonnaise is made up of. What it's probably doing there is seeing what tokens the word mayonnaise is made up of, but it doesn't really know what letters those tokens are made up of, and somehow it thinks "ma" (which is probably a token) = N probably by some association it has in its data.

Clearly, it's also not very good at counting, because all those numbers are wrong.
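To show why letter counting is awkward for a token-based model, here is a toy sketch. The vocabulary and the split of "mayonnaise" are invented for illustration, and the greedy longest-match rule is a simplification: real GPT tokenizers use byte pair encoding with a far larger vocabulary, so the actual split will differ.

```python
# Made-up vocabulary: three multi-letter pieces plus single letters as a fallback.
TOY_VOCAB = {"may": 0, "onna": 1, "ise": 2}
for letter in "abcdefghijklmnopqrstuvwxyz":
    TOY_VOCAB.setdefault(letter, len(TOY_VOCAB))


def tokenize(word: str, vocab: dict) -> list:
    """Split a word into the longest vocabulary pieces, left to right."""
    tokens = []
    i = 0
    while i < len(word):
        # Try the longest remaining substring first, then shorter ones.
        for j in range(len(word), i, -1):
            piece = word[i:j]
            if piece in vocab:
                tokens.append(piece)
                i = j
                break
        else:
            raise ValueError(f"no token covers {word[i]!r}")
    return tokens


pieces = tokenize("mayonnaise", TOY_VOCAB)
ids = [TOY_VOCAB[p] for p in pieces]
print(pieces)  # the pieces a human can still read
print(ids)     # what the model actually receives: just numbers
```

In this toy setup the model only ever sees the ID list, and nothing in those numbers says that the second piece contains two Ns. Any letter count has to come from associations learned during training rather than from looking at the spelling.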


ChatGPT more like ZoomerGPT.
