Hello,

recently I've been trying to evaluate the performances of emulators, more specifically on the CPU part. Some benchmarks are available on the web for very specific instructions, and on the other end what FPS can be expected per game on a given emulator/hardware, but not much on the performances of typical workloads and a comparison with the equivalent built for the host architecture, so I thought I could setup a benchmark test and share my findings.

Emulators are notably hard on hardware, especially now that the gap between generation is reducing, at least on the single thread performances. And the democratization of SoCs where the performances are quite constrained push for more optimization.

How CPU emulators are working

When a software is built for a given target hardware, the binary follows a given ISA, ARM for the 3DS for instance. If we want to run this software on another architecture, typically X86_64, all the instructions must be translated in a form the host can understand.

The oldest (slower) CPUs are often emulated by an interpreter as simplier and easier to guarantee timing accuracy, emulation for more recent CPUs use Just In Time recompilers to generate equivalent CPU instructions on the fly, with as few overhead as possible.

Communication with the rest of the system depends on the platform, when running on bare metal (no OS) like the Wii, the software usually reads/writes directly from/to hardware registers mapped to known locations in memory, these read/writes must be intercepted to simulate the rest of the system. On platforms using a kernel like PS4/Switch or Linux, the software is running in user mode and issues supervisor calls to interact with the kernel, these are special instructions that must intercepted and the OS behaviour simulated.

Testing methodology

For the following we'll assume a host system in X86_64 (Intel i5-4590), and an emulated system in ARM 32 bits (raspberry pi, 3DS).

To be able to compare native binary with emulated binary on various emulators, we'll need to be able to compile a source code against the 2 architectures, so this will be a systhetic benchmark which will of course have its own biais but that we can control. The benchmarking algorithm are

The test is run under Ubuntu 18, using cross compilation:

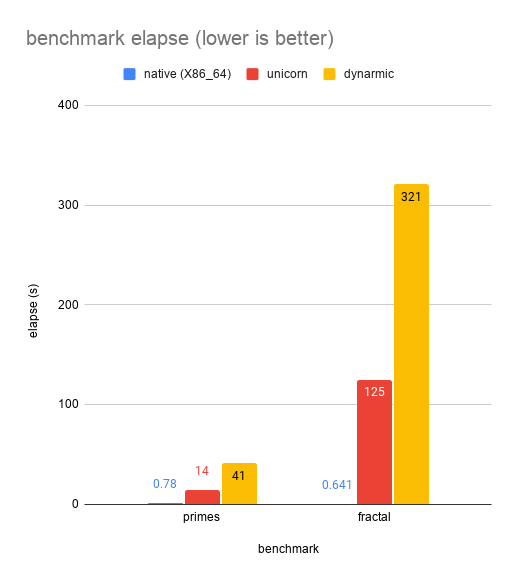

The tested emulators are Unicorn (CageTheUnicorn, Angr) and Dynarmic (Citra, Yuzu), both compiled in release mode from github master branch on 2021/03/01. The result numbers include the compilation time (JIT) however they are negligible compared to the run time due to the small size of the code.

Results

Conclusions

Even on a (relativelly) simple benchmark, dynamically recompiling emulators are still orders of magnitude slower than native code (18X to 195X for unicorn, 52X to 500X for dynarmic).

I was surprised to see that unicorn is significatly faster than dynarmic though, as I know Yuzu (ARM64) switched to dynarmic for better performance, if somebody is able to explain the discrepancy I would be curious to know.

Some loads such as floating point operations seems to have a bigger toll on the emulator than others, it would be interesting to have a deeper look at the trade-offs and architectural choices that have been made in the main emulators.

all sources can be found on https://github.com/vdwjeremy/jit-bench

Recently I've been trying to evaluate the performance of emulators, specifically the CPU part. Some benchmarks are available on the web for very specific instructions, and at the other end of the spectrum you can find what FPS to expect per game on a given emulator/hardware combination. There is not much, however, on the performance of typical workloads compared with the equivalent code built for the host architecture, so I thought I could set up a benchmark and share my findings.

Emulators are notoriously hard on hardware, especially now that the gap between hardware generations is shrinking, at least for single-thread performance. The democratization of SoCs, where performance is quite constrained, pushes for even more optimization.

How CPU emulators work

When software is built for a given target hardware, the binary follows a given ISA, ARM for the 3DS for instance. If we want to run this software on another architecture, typically x86_64, all the instructions must be translated into a form the host can understand.

The oldest (slowest) CPUs are often emulated with an interpreter, as it is simpler and makes timing accuracy easier to guarantee. Emulators for more recent CPUs use Just-In-Time recompilers to generate equivalent host instructions on the fly, with as little overhead as possible.
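To make the interpreter approach concrete, here is a minimal sketch of a fetch/decode/execute loop. The guest ISA (`Op`, `Insn`), the `GuestCpu` struct and the `run_sum_program` helper are all invented for illustration; this is not ARM and not how Unicorn or Dynarmic are implemented.

```cpp
#include <array>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical toy guest ISA, invented for this sketch (not ARM).
enum class Op : std::uint8_t { LoadImm, Add, Sub, JumpIfNotZero, Halt };

struct Insn {
    Op op;
    std::uint8_t dst;  // destination register index
    std::uint8_t src;  // source register index (unused by some ops)
    std::int32_t imm;  // immediate value or jump target
};

struct GuestCpu {
    std::array<std::int32_t, 4> regs{};
    std::size_t pc = 0;
    std::uint64_t executed = 0;  // number of guest instructions executed
};

// Classic interpreter loop: one host-side dispatch per guest instruction.
void interpret(GuestCpu& cpu, const std::vector<Insn>& program) {
    for (;;) {
        const Insn& insn = program[cpu.pc++];  // fetch
        ++cpu.executed;
        switch (insn.op) {                     // decode + execute
        case Op::LoadImm: cpu.regs[insn.dst] = insn.imm; break;
        case Op::Add:     cpu.regs[insn.dst] += cpu.regs[insn.src]; break;
        case Op::Sub:     cpu.regs[insn.dst] -= cpu.regs[insn.src]; break;
        case Op::JumpIfNotZero:
            if (cpu.regs[insn.dst] != 0) cpu.pc = static_cast<std::size_t>(insn.imm);
            break;
        case Op::Halt: return;
        }
    }
}

// Runs a small guest program computing n + (n-1) + ... + 1 (requires n >= 1).
std::int32_t run_sum_program(std::int32_t n, std::uint64_t* executed = nullptr) {
    const std::vector<Insn> program = {
        {Op::LoadImm, 0, 0, 0},        // r0 = 0 (accumulator)
        {Op::LoadImm, 1, 0, n},        // r1 = n (counter)
        {Op::LoadImm, 2, 0, 1},        // r2 = 1 (decrement)
        {Op::Add, 0, 1, 0},            // r0 += r1
        {Op::Sub, 1, 2, 0},            // r1 -= r2
        {Op::JumpIfNotZero, 1, 0, 3},  // loop back to the Add while r1 != 0
        {Op::Halt, 0, 0, 0},
    };
    GuestCpu cpu;
    interpret(cpu, program);
    if (executed != nullptr) *executed = cpu.executed;
    return cpu.regs[0];
}
```

Calling `run_sum_program(10)` returns 55. Every guest instruction pays for a fetch and a `switch` dispatch on the host, and that per-instruction cost is what a JIT tries to avoid by translating whole blocks of guest code once and then running the generated host code directly.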

Communication with the rest of the system depends on the platform. When running on bare metal (no OS), as on the Wii, the software usually reads from and writes to hardware registers mapped at known locations in memory; these reads and writes must be intercepted to simulate the rest of the system. On platforms using a kernel, like the PS4, the Switch or Linux, the software runs in user mode and issues supervisor calls to interact with the kernel; these are special instructions that must be intercepted, and the OS behaviour simulated.
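Both interception mechanisms can be sketched in a few lines. Everything below is an assumption made up for the example: the memory map (`kRamSize`, `kUartTxAddr`), the `Machine` struct, and the supervisor call numbers (1 = write, 2 = exit) do not correspond to any real console or kernel ABI.

```cpp
#include <array>
#include <cstddef>
#include <cstdint>
#include <string>

// Hypothetical memory map, invented for this sketch.
constexpr std::uint32_t kRamSize = 0x1000;
constexpr std::uint32_t kUartTxAddr = 0x10000000;  // fake "serial output" register

struct Machine {
    std::array<std::uint8_t, kRamSize> ram{};
    std::array<std::uint32_t, 4> regs{};  // guest registers r0..r3
    std::string uart_output;              // bytes the fake device has received
    bool exited = false;
    std::uint32_t exit_code = 0;
};

// Every guest store goes through here, so a write to a device register
// can be redirected to the code simulating that device.
void guest_write8(Machine& m, std::uint32_t addr, std::uint8_t value) {
    if (addr == kUartTxAddr) {
        m.uart_output.push_back(static_cast<char>(value));
    } else if (addr < kRamSize) {
        m.ram[addr] = value;
    }
    // Writes to unmapped addresses are silently dropped in this sketch.
}

std::uint8_t guest_read8(const Machine& m, std::uint32_t addr) {
    return addr < kRamSize ? m.ram[addr] : 0;
}

// Called when the guest executes its supervisor call instruction.
// Hypothetical convention: r0 = call number, r1/r2 = arguments.
void handle_supervisor_call(Machine& m) {
    switch (m.regs[0]) {
    case 1:  // write(buffer = r1, length = r2): copy guest bytes to the output
        for (std::uint32_t i = 0; i < m.regs[2]; ++i) {
            m.uart_output.push_back(static_cast<char>(guest_read8(m, m.regs[1] + i)));
        }
        break;
    case 2:  // exit(code = r1)
        m.exited = true;
        m.exit_code = m.regs[1];
        break;
    default:
        break;  // unknown calls are ignored in this sketch
    }
}
```

In a real emulator the CPU core would call something like `guest_write8` for each store (or use host page protection to trap only the device addresses), and would invoke the supervisor call handler whenever it decodes the corresponding instruction.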

Testing methodology

For the following we'll assume an x86_64 host system (Intel i5-4590) and a 32-bit ARM emulated system (Raspberry Pi, 3DS).

To compare a native binary with the same code running on various emulators, we need to compile the same source code for both architectures. This will therefore be a synthetic benchmark, which of course has its own bias, but one that we can control. The benchmarking algorithms are:

- a prime number finder: heavy integer operations

- a fractal image computation: heavy floating-point operations and memory access
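As a rough idea of what these two kinds of workload look like, here is a minimal sketch. It is not the code from the repository: the function names, the trial-division approach, the choice of the Mandelbrot set as the fractal, and the image size are all assumptions made for illustration.

```cpp
#include <cstdint>

// Integer-heavy workload: count the primes below `limit` by trial division.
std::uint32_t count_primes(std::uint32_t limit) {
    std::uint32_t count = 0;
    for (std::uint32_t n = 2; n < limit; ++n) {
        bool is_prime = true;
        for (std::uint32_t d = 2; d * d <= n; ++d) {
            if (n % d == 0) { is_prime = false; break; }
        }
        if (is_prime) ++count;
    }
    return count;
}

// Floating-point workload: Mandelbrot escape-time iteration for one point.
std::uint32_t mandelbrot_iterations(float cr, float ci, std::uint32_t max_iter) {
    float zr = 0.0f, zi = 0.0f;
    std::uint32_t i = 0;
    while (i < max_iter && zr * zr + zi * zi <= 4.0f) {
        const float next_zr = zr * zr - zi * zi + cr;
        zi = 2.0f * zr * zi + ci;
        zr = next_zr;
        ++i;
    }
    return i;
}

// Hypothetical image size, small enough to live on the stack.
constexpr int kWidth = 64;
constexpr int kHeight = 32;

// Renders the fractal into a stack buffer (memory writes), then sums the
// pixels (memory reads) so the result depends on the whole image.
// Iteration counts are truncated to 8 bits, so keep max_iter <= 255.
std::uint32_t fractal_checksum(std::uint32_t max_iter) {
    std::uint8_t image[kHeight][kWidth];
    for (int y = 0; y < kHeight; ++y) {
        for (int x = 0; x < kWidth; ++x) {
            const float cr = -2.0f + 3.0f * static_cast<float>(x) / kWidth;
            const float ci = -1.0f + 2.0f * static_cast<float>(y) / kHeight;
            image[y][x] = static_cast<std::uint8_t>(mandelbrot_iterations(cr, ci, max_iter));
        }
    }
    std::uint32_t checksum = 0;
    for (int y = 0; y < kHeight; ++y) {
        for (int x = 0; x < kWidth; ++x) checksum += image[y][x];
    }
    return checksum;
}
```

For example, `count_primes(100)` returns 25. Returning a count or a checksum, rather than nothing, keeps the compiler from optimizing the work away and gives a value that can be compared between the native and emulated runs.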

The test is run under Ubuntu 18, using cross compilation:

- tester.cpp -- g++ --> tester_x86

- tester.cpp -- arm-linux-gnueabi-g++ --> tester_arm

The benchmark program has to respect a few constraints:

- no dynamic allocation on the heap; the program must use only the stack

- no input/output as part of the benchmark loop

- the benchmark algorithm is isolated in a separate function, while OS-related tasks (initialization, input, output) are kept in __libc_start_main/main; this separate function will be the entry point for the emulator
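The constraints above lead to a program layout along these lines. This is only a sketch under my own assumptions: `benchmark_entry`, `time_native_run` and `kMaxLimit` are hypothetical names, the sieve is just a stand-in kernel, and `__attribute__((noinline))` is specific to GCC/Clang.

```cpp
#include <chrono>
#include <cstdint>

// Hypothetical upper bound so the working buffer can live on the stack.
constexpr std::uint32_t kMaxLimit = 4096;

extern "C" {
// Benchmark kernel: no heap, no I/O, no system calls. extern "C" gives it an
// unmangled symbol that is easy to locate in the ARM binary, and noinline
// keeps it as a separate function the emulator can jump to directly.
__attribute__((noinline)) std::uint32_t benchmark_entry(std::uint32_t limit) {
    if (limit > kMaxLimit) limit = kMaxLimit;
    bool composite[kMaxLimit] = {};  // stack-only working memory
    std::uint32_t count = 0;
    for (std::uint32_t n = 2; n < limit; ++n) {
        if (composite[n]) continue;
        ++count;  // n is prime
        for (std::uint32_t m = n * n; m < limit; m += n) composite[m] = true;
    }
    return count;
}
}

// Everything that needs the OS (here the clock) stays outside the kernel.
// In the native build this would be called from main(); it returns the
// elapsed time in seconds and stores the kernel result in *result.
double time_native_run(std::uint32_t limit, std::uint32_t* result) {
    const auto start = std::chrono::steady_clock::now();
    *result = benchmark_entry(limit);
    const auto stop = std::chrono::steady_clock::now();
    return std::chrono::duration<double>(stop - start).count();
}
```

With this split, the emulator only needs a stack, the function's address and its argument registers set up to run `benchmark_entry`, without having to simulate any kernel or C library start-up code.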

The tested emulators are Unicorn (used by CageTheUnicorn and angr) and Dynarmic (used by Citra and yuzu), both compiled in release mode from the GitHub master branch on 2021/03/01. The results include the JIT compilation time, but it is negligible compared to the run time due to the small size of the code.

Results

Conclusions

Even on a (relatively) simple benchmark, dynamically recompiling emulators are still orders of magnitude slower than native code (18x to 195x for Unicorn, 52x to 500x for Dynarmic).

I was surprised to see that Unicorn is significantly faster than Dynarmic though, as I know yuzu (ARM64) switched to Dynarmic for better performance. If somebody is able to explain the discrepancy, I would be curious to know.

Some loads, such as floating-point operations, seem to take a bigger toll on the emulator than others. It would be interesting to take a deeper look at the trade-offs and architectural choices that have been made in the main emulators.

All sources can be found at https://github.com/vdwjeremy/jit-bench